Jurgis Peters, Alternative Realities, 2022

Long before the military aggression against Ukraine had even started, the Kremlin was ramping up disinformation campaigns aimed at influencing public opinion inside and outside of Russia. Unsurprisingly, social media has been playing a key role in spreading mis- and disinformation about the invasion.

As a result, a large share of Russian citizens and Putin’s supporters across the world seem to live in an alternative reality, where Ukrainians are crying for help and Russian troops rush to their rescue. Russian soldiers themselves have been victims of the disinformation discourse. In the early days of the invasion (probably less so today), they went to war perhaps without fully realising the true geopolitical situation.

Jurgis Peters, Alternative Realities, 2022. Splintered Realities. Press Conference. RIXC Art Science Festival 2022. Photos by Juris Rozenbergs

Artist Jurgis Peters has been looking into the true extent of disinformation on social media while using these same platforms to bring out a more realistic “image” of Russian casualties. The work is a kind of artistic challenge to Moscow’s reluctance to reveal the true number of Russian soldiers who lost their lives in the brutal invasion of Ukraine.

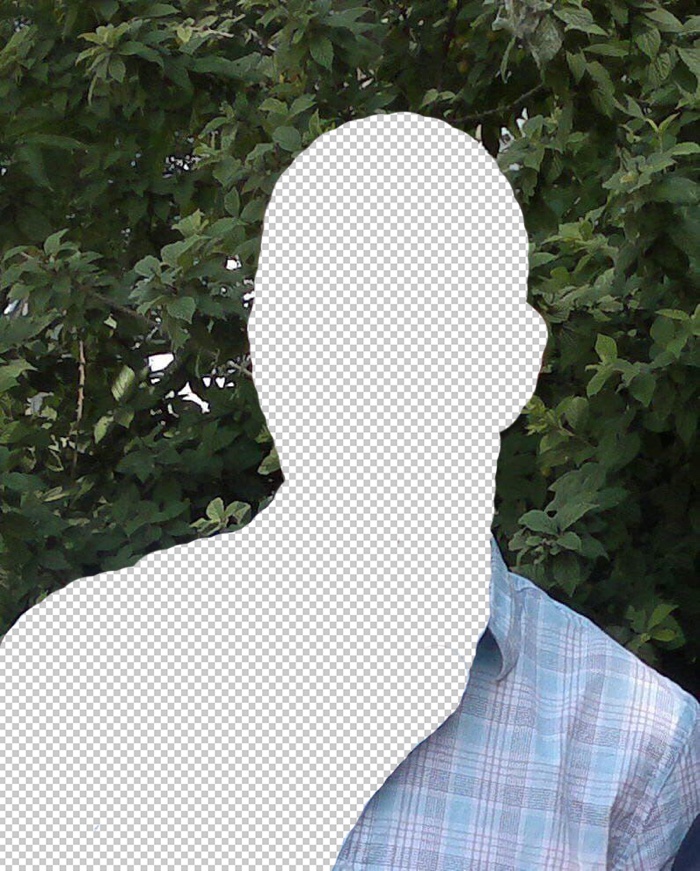

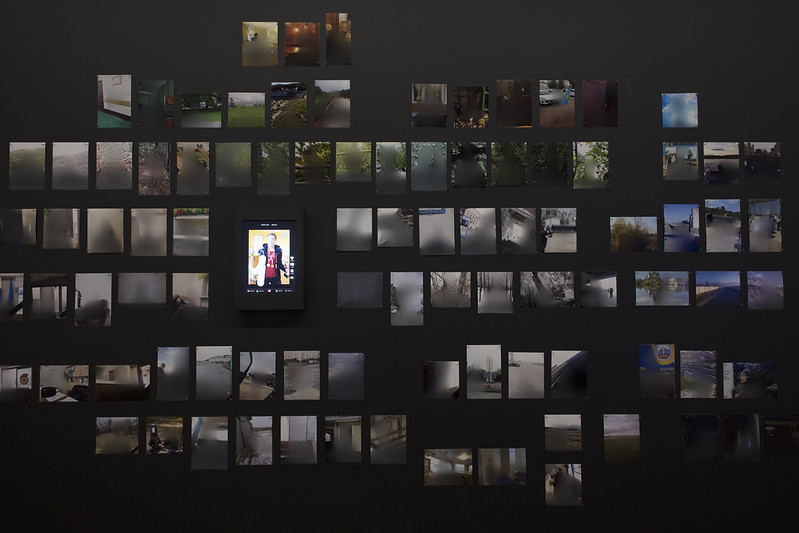

The Alternative Realities installation consists of dozens of photos of fallen Russian army soldiers pinned on a black wall. The artist combed through Russian social media accounts to find the names and social media profiles of Russian soldiers who had died in Ukraine. He then used machine learning tools to meticulously erase the faces of the soldiers from these images (a short video on the project page shows the process). The men now look like ghosts. The paradox is that by cancelling their presence in the photo, Peters gives visibility to these fallen soldiers. He anonymises them and, at the same time, he points the finger at the Russian government’s failure to take responsibility for these deaths.

I discovered the work a couple of weeks ago while visiting the Splintered Realities exhibition of the RIXC Art & Science festival in Riga, Latvia. The festival explores a contemporary world fractured by an ongoing war, a pandemic, media ubiquity and other dividing factors.

I asked Jurgis Peters to take us through the development of his installation:

Jurgis Peters, Alternative Realities, 2022. Splintered Realities. RIXC Art Science Festival 2022 Exhibition. Photos by Kristīne Madjare

Hi Jurgis! How did you get the idea for Alternative Realities?

Since the beginning of the Russian invasion of Ukraine, I have followed the news on the topic closely and have been conducting my own research using various social media platforms. One thing that I was paying particular attention to was the role of new technologies and disinformation campaigns in this modern warfare. Having found many examples of such campaigns and seeing this weird, completely opposite alternative reality that the disinformation narrative has created, I knew that I wanted to talk about it via my work for the Splintered Realities festival.

If I remember correctly, you have a background in cybersecurity. How useful was it in order to detect disinformation and its techniques?

Yes, I do indeed have a background in cybersecurity and at the time when I was creating this piece, I was working for a governmental organisation that attempts to prevent cyber attacks. As part of my day-to-day duties, I had to be on the lookout for any disinformation campaigns originating from Latvia. But as far as particular techniques for detecting disinformation campaigns are concerned, I would say that there are no trade secrets, so to speak, and that it is more a matter of using common sense than of a particular skill that I might have learned because of my background.

Jurgis Peters, Alternative Realities, 2022. Images with the erased soldiers

How important is the role of social media influencers in this disinformation war? And are there platforms where that disinformation machine is particularly active?

I would say that in a situation where legitimate, trustworthy information is not freely available, anything that contributes to this disinformation narrative is very dangerous because it only amplifies this idea of the alternative reality. But as for social media influencers – I think that they do play an important role because they have a large reach among particular groups of society that wouldn’t necessarily consume much traditional media (TV, radio, newspapers). This would be especially true of younger generations that get most of their news from social media platforms, such as TikTok.

And there also is the fact that at least for their followers, influencers might have more credibility than traditional news outlets. This is due to the influencer being seen more as a real person, or as a distant friend perhaps, whose opinion is valued amongst the followers.

As for the platforms where disinformation is particularly active – this would depend on each platform’s policy for combating disinformation. We saw that at the beginning of the Russian invasion of Ukraine, Facebook along with Twitter, Instagram and YouTube were rather quick at flagging the accounts that spread pro-Kremlin narratives and deleting the offending posts. TikTok, on the other hand, went in a different direction and cut off Russians from the rest of the world. As a result, people in Russia on TikTok could only see content generated from within Russia. This then meant that the platform was flooded with pro-Kremlin content which was hardly moderated by TikTok.

Telegram is another platform that has been heavily used to spread disinformation but also to access uncensored information. Because the chat groups in Telegram are private, there is no content moderation on the app’s side (unless users themselves report the content).

How can people who don’t have your skills detect that an influencer is spreading warfare disinformation? Are there signs?

If a legitimate influencer (in this case a real person who has organic followers) starts to spread disinformation, it might be hard to detect unless you know for a fact that the information is false. The key thing would be to have access to trustworthy information that you can check to see if the facts mentioned by the influencer add up. A sign that there might be something wrong could be if the influencer is all of a sudden posting political content with a narrative that they hadn’t used before, especially if these politically oriented posts start to appear frequently.

An interesting thing that was found in disinformation campaigns on TikTok was that dozens of Russian influencers posted content where they were doing a TikTok trend whilst reading the same pro-war propaganda script line by line. So I guess this would be another sign to watch out for – having different influencer accounts repeating the same political message.
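The "same script, different accounts" signal can be illustrated with a rough Python sketch. This is only an assumption about how one might check for it, not a method described by Peters: it compares overlapping three-word sequences between posts and flags pairs of accounts whose wording is nearly identical. The function names, the account names, the sample texts and the 0.6 threshold are all invented for illustration.

```python
import string
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word sequences (shingles) in a text."""
    words = [word.strip(string.punctuation) for word in text.lower().split()]
    words = [word for word in words if word]
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_shared_scripts(posts, threshold=0.6):
    """Flag pairs of accounts whose posts overlap heavily in wording.

    posts: dict mapping a (hypothetical) account name to the post text.
    threshold: illustrative cut-off, not an empirically tuned value.
    """
    sets = {account: shingles(text) for account, text in posts.items()}
    flagged = []
    for first, second in combinations(sorted(sets), 2):
        score = jaccard(sets[first], sets[second])
        if score >= threshold:
            flagged.append((first, second, score))
    return flagged


# Invented sample posts, for illustration only.
posts = {
    "account_a": "We stand together for our country and our future!",
    "account_b": "we stand together for our country and our future",
    "account_c": "Here is my new dance video for the weekend",
}
flagged = find_shared_scripts(posts)
print(flagged)
```

A check like this only catches near-verbatim repetition. It would miss paraphrased scripts, and it still needs a human to judge whether a flagged pair is coordinated messaging or an innocent shared quote.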

Jurgis Peters, Alternative Realities, 2022. Opening of the Splintered Realities exhibition. RIXC Art Science Festival 2022. Photos by Kristīne Madjare

How did you find the identity of fallen Russian soldiers? Not even Russians know exactly how many casualties this war is creating…

It took considerable time to find the photos of the fallen soldiers. At first, I was able to find multiple lists of the dead soldiers that were published in various VK (“V Kontakte” – a Russian version of Facebook) accounts and also some lists that were accessible from the Russian search engine Yandex. This was before it was prohibited by criminal law to publish any information about the casualties of war in Russia. But with these lists, in most cases, there was only a name and a surname for the soldier and no photograph. It was quickly clear that by only using their name and surname I couldn’t be sure of finding the photographs of the person in question, since dozens of people could have the same name and surname.

So I kept searching for a reliable source of information that could also provide the photographs. I did find such a source in the Telegram group whose name loosely translates to “Look for Your Own”. This group was created by Ukrainians for the mothers of Russian soldiers so that they could find out if their sons had been killed in Ukraine. In the first months of the war, the group was very active, featuring images of the fallen soldiers that were mainly taken from their social media accounts. I was able to download all the images that had been shared in the group, and that served as the basis for my work.

The photos of the soldiers come from social media. Most of the portraits are very informal. How did you select the ones you used? Were there specific criteria?

I guess the main criterion was that the images were of decent quality and size. Usually, there was only one image per person so, in that sense, I did not have to decide which image to use.

Your installation at the Splintered Realities festival looks like a mosaic of small portraits on a black wall. In the middle, a screen shows the erasure process. Can you explain how the erasure works? What type of algorithm did you use?

To clean up the image I use an inpainting service that utilises a so-called LaMa (Resolution-robust Large Mask Inpainting with Fourier Convolutions) machine learning model. For this project, I have altered the original web implementation to suit my needs. Basically, the inpainting service allows you to paint over a portion of an image; this painted or deleted portion is then filled with what the AI thinks should be left there when the object is removed.
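To make the paint-over-and-fill idea concrete, here is a minimal Python sketch. It does not use LaMa and is not the artist's implementation: it swaps in a much simpler diffusion-style fill, where every painted-over pixel is repeatedly replaced by the average of its neighbours so that the surrounding background spreads into the hole. The function name `inpaint`, the toy grayscale grid and the mask are all assumptions made for illustration.

```python
def inpaint(image, mask, iterations=200):
    """Fill masked pixels of a grayscale grid by averaging their neighbours.

    A toy stand-in for a learned model such as LaMa, not the artist's method.
    image: list of rows of numbers; mask: same shape, True where painted over.
    """
    height, width = len(image), len(image[0])
    result = [[float(value) for value in row] for row in image]
    masked = [(y, x) for y in range(height) for x in range(width) if mask[y][x]]
    known = [result[y][x] for y in range(height) for x in range(width)
             if not mask[y][x]]
    # Start every painted-over pixel at the mean of the untouched pixels.
    start = sum(known) / len(known)
    for y, x in masked:
        result[y][x] = start
    # Repeatedly replace each masked pixel with the mean of its 4 neighbours,
    # so the surrounding background gradually spreads into the hole.
    for _ in range(iterations):
        updated = [row[:] for row in result]
        for y, x in masked:
            neighbours = [result[ny][nx]
                          for ny, nx in ((y - 1, x), (y + 1, x),
                                         (y, x - 1), (y, x + 1))
                          if 0 <= ny < height and 0 <= nx < width]
            updated[y][x] = sum(neighbours) / len(neighbours)
        result = updated
    return result


# Toy example: a 7x7 horizontal gradient with a bright 3x3 "figure" in the middle.
image = [[10 * x for x in range(7)] for _ in range(7)]
mask = [[2 <= y <= 4 and 2 <= x <= 4 for x in range(7)] for y in range(7)]
for y in range(7):
    for x in range(7):
        if mask[y][x]:
            image[y][x] = 255

filled = inpaint(image, mask)
print([round(value) for value in filled[3]])
```

In this toy case the masked block should blend back into the gradient around it. A learned model such as LaMa goes much further, since it can synthesise plausible texture and structure rather than just smoothing, which is why a simple fill like this would look blurry on a real photograph.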

Are you planning to work on similar projects or themes in the future?

Since the beginning of the Russian invasion, I have had the urge to speak out about it, and with this work completed, I feel that I have had my opportunity to express my opinion. So I feel that at least for now, I want to focus on some other themes that are perhaps not as heavy. But I do believe that at some point I will come back to working with other aspects of disinformation.

Thanks Jurgis!

The RIXC Art Science Festival 2022 under the title SPLINTERED REALITIES takes place in Riga and virtually. The Festival Program includes the EXHIBITION (August 25 – October 16, 2022 / Kim? Contemporary Art Center) and the CONFERENCE (October 6–8, 2022 / Hybrid: Virtual / Riga), featuring Deep Europe Symposium, the 5th Renewable Futures conference and Live Sessions from Liepaja, Karlsruhe and Oslo.