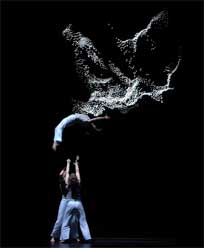

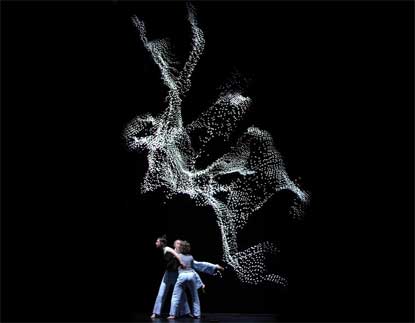

UnitedVisualArtists have just uploaded a video of their latest project, Echo, to their website.

The 8-minute live performance piece was commissioned by Vamp and produced in collaboration with Mimbre for a launch in the Turbine Hall at Tate Modern, London.

I found the video so beautiful that I pestered UVA with questions. Ash Nehru kindly answered them.

What’s the technology behind Echo?

The LED screen we used was a Lighthouse R10, 8m wide by 11m high. We used Point Grey Research’s Bumblebee2 stereo camera system, mounted at the foot of the stage, and our own proprietary software (dragonfly3) to render the resulting 3D point-cloud in real time. The motion of the ‘virtual camera’ was scripted within D3.
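For readers wondering how a stereo camera ends up producing a 3D point-cloud, here is a minimal sketch of the standard pinhole back-projection from a disparity map. It is not UVA's code and says nothing about how their software works internally; the function name, the parameters and the sample numbers are all assumptions made for illustration.

```python
def disparity_to_points(disparity, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map (a list of rows) into 3D points.

    Illustrative assumptions: an ideal rectified stereo pair, focal
    length in pixels, baseline in metres, principal point (cx, cy).
    """
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                # No stereo match for this pixel, so no depth estimate.
                continue
            z = focal_px * baseline_m / d   # depth from disparity
            x = (u - cx) * z / focal_px     # horizontal offset
            y = (v - cy) * z / focal_px     # vertical offset
            points.append((x, y, z))
    return points


# Hypothetical 2x2 disparity map; zeros mark pixels without a match.
cloud = disparity_to_points([[0, 10], [20, 0]],
                            focal_px=100.0, baseline_m=0.12,
                            cx=0.5, cy=0.5)
```

Larger disparities map to nearer points, which is why a dancer close to the camera comes out as a dense, well-defined cluster while the background drops away.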

How about the collaboration with the dancers, Mimbre and choreographer Flick Ferdinando? How did it go?

Because we had very little rehearsal time (5 days), the dancers assembled the performance from sequences taken from their existing show, but simplified and slowed down to create a dreamlike, ‘sculptural’ effect. We set up the Bumblebee system so that the choreographer could select the moves that worked best for the camera.

Aside from offering our opinions as to which music worked best with which moves, we exercised as little control over the choreography as possible.

Once the choreography was fixed, we recorded the performance to create a 3D video file, which was then used to sequence the camera moves.
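To give a rough idea of what sequencing camera moves against a recording can involve, here is a small sketch that interpolates a virtual camera position between timed keyframes. It is only an illustration under assumed conventions (linear interpolation, strictly increasing keyframe times, positions as x, y, z tuples), not a description of how UVA's software scripts its camera.

```python
def camera_position(keyframes, t):
    """Interpolate a camera position at time t.

    keyframes: list of (time_seconds, (x, y, z)) sorted by strictly
    increasing time. The layout is hypothetical.
    """
    if t <= keyframes[0][0]:
        return keyframes[0][1]      # hold the first pose before the start
    if t >= keyframes[-1][0]:
        return keyframes[-1][1]     # hold the last pose after the end
    for (t0, p0), (t1, p1) in zip(keyframes, keyframes[1:]):
        if t0 <= t <= t1:
            a = (t - t0) / (t1 - t0)  # fraction of the way through the segment
            return tuple(p0[i] + a * (p1[i] - p0[i]) for i in range(3))


# Hypothetical camera path: slide sideways, then rise and move closer.
path = [(0.0, (0.0, 0.0, -5.0)),
        (4.0, (2.0, 0.0, -5.0)),
        (8.0, (2.0, 1.0, -3.0))]
pose = camera_position(path, 6.0)
```

Because the recorded performance plays back identically every time, a path like this can be tuned against it frame by frame before the live show.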

The idea for the performance came from our original work with the Bumblebee system, an interactive installation called Mirror that we presented at the Kemistry gallery in Shoreditch, London. The particular rendering style we used for that project was developed slightly to work better with the LED screen.

Thanks Ash!

If you’re in Northern Europe, Austin or Los Angeles, chances are that you can catch the work of UnitedVisualArtists: they are currently touring with Massive Attack. As Jose Luis commented, you will also be able to enjoy UVA’s own live show next October in Barcelona at ArtFutura 2006.

More: an interview with UnitedVisualArtists; their video for Colder.