Fernando Orellana and Brendan Burns have collaborated on a new artwork that investigates one of the possible human-robot relationships.

Using recorded brainwave activity and eye movements during REM (rapid eye movement) sleep to determine robot behaviors and head positions, “Sleep Waking” acts as a way to “play-back” dreams.

I asked Fernando to give us more details about the robot:

How does Sleep Waking work exactly?

I spent a night at The Albany Regional Sleep Disorder Center in Albany, NY. There they wired me up with a variety of sensors, recording everything from EEG to EKG to eye positioning data. We then took that data and interpreted it in two ways:

The eye position data we simply apply to the direction the robot’s head is looking. So if my eye was looking left, the robot looks left.

The use of the EEG data is a bit more complex. Running it through a machine learning algorithm, we identified several patterns from a sample of the data set (both REM and non-REM events). We then associated preprogrammed robot behaviors with these patterns. Using the patterns like filters, we process the entire data set, letting the robot act out each behavior as each pattern surfaces in the signal. Periods of high activity (REM) were associated with dynamic behaviors (flying, scared, etc.) and low activity with more subtle ones (gesturing, looking around, etc.). The “behaviors” the robot demonstrates are some of the actions I might do (along with everyone else) in a dream.
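As a rough illustration of the two mappings Fernando describes, here is a minimal Python sketch. It is not the artists' code. The interview does not name the machine learning algorithm, so the sketch swaps in a much simpler stand-in: each fixed-size window of the signal is reduced to its mean absolute amplitude and matched to the nearest hypothetical "pattern" level. The function names, the normalized eye position range, the 45-degree pan limit, and the numeric pattern levels are all assumptions made for illustration.

```python
from typing import Dict, List, Sequence

# Hypothetical activity level for each behavior (not from the artwork).
# High values stand in for REM-like activity, low values for non-REM.
PATTERNS: Dict[str, float] = {
    "flying": 0.9,
    "scared": 0.7,
    "gesturing": 0.3,
    "looking_around": 0.1,
}


def head_pan_from_eye(eye_x: float, max_pan_deg: float = 45.0) -> float:
    """Map a normalized horizontal eye position to a head pan angle.

    Assumes eye_x is -1.0 for fully left and +1.0 for fully right;
    values outside that range are clamped.
    """
    clamped = max(-1.0, min(1.0, eye_x))
    return clamped * max_pan_deg


def window_activity(samples: Sequence[float]) -> float:
    """Mean absolute amplitude of one window of EEG samples."""
    return sum(abs(s) for s in samples) / len(samples)


def classify_window(samples: Sequence[float],
                    patterns: Dict[str, float] = PATTERNS) -> str:
    """Return the behavior whose pattern level is closest to the window."""
    activity = window_activity(samples)
    return min(patterns, key=lambda name: abs(patterns[name] - activity))


def play_back(signal: Sequence[float], window_size: int,
              patterns: Dict[str, float] = PATTERNS) -> List[str]:
    """Slide over the signal in non-overlapping windows and list behaviors."""
    behaviors = []
    for start in range(0, len(signal) - window_size + 1, window_size):
        window = signal[start:start + window_size]
        behaviors.append(classify_window(window, patterns))
    return behaviors


if __name__ == "__main__":
    quiet = [0.1, -0.1, 0.1, -0.1]   # low activity, non-REM-like
    active = [0.9, -0.9, 0.9, -0.9]  # high activity, REM-like
    print(play_back(quiet + active, window_size=4))
    print(head_pan_from_eye(-1.0))
```

In this toy version, a quiet stretch of signal selects a subtle behavior and a busy stretch selects a dynamic one, which mirrors the REM versus non-REM split described in the answer. The real piece matches learned patterns rather than a single amplitude value.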

We also use robot vision for navigation and for keeping the robot on its pedestal. The camera is mounted about three feet above the robot and is not shown in the documentation.


What do you think the robot can bring to our understanding of possible human-robot relationships?

Sleep Waking is a metaphor for a reality that could be in our future. In the piece we use a fair amount of artistic license. Though the eye positioning data is a literal interpretation, what we do with the EEG data is a bit more subjective. However, perhaps one day we will have the technology to literally allow a robot to act out what we do in our dreams. What could we learn from seeing our dreams played back for us? Will we save our dreams like we save our photographs?

Taking a wider view, robots are increasingly used to augment human experience. From robotic prosthetic devices to personalized web presences and implanted RFID chips, technology is moving from being an externalized tool to being a literal extension of who we are. By giving an example of this process and drawing attention to it, we hope to give people the opportunity to think critically about what personalized technology actually means.

Did you use an existing robot or did you build it from scratch?

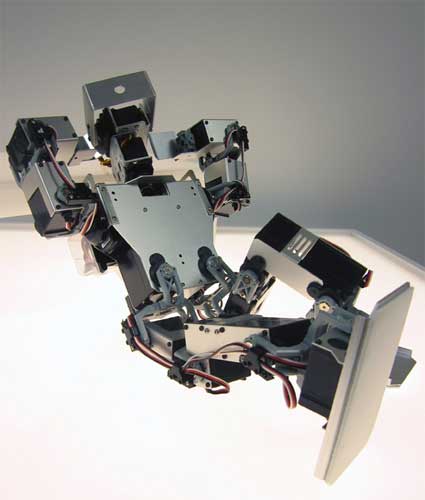

We used a modified Kondo KHR-2HV humanoid robot. In the next iteration of this piece, we will be fabricating my own design for a humanoid robot.

Thanks Fernando!

See Sleep Waking at the BRAINWAVE: Common Senses exhibition which opens on February 16 at Exit Art.

Another of Fernando’s works, 8520 S.W.27th Pl. v.2, is still on view at the Emergentes exhibition at the LABoral center in Gijon, Spain until May 12, 2008.
