James Bridle, Untitled (Autonomous Trap 001)

Tesla customers who want to take advantage of its cars’ Autopilot mode are required to agree that the system is in a “public beta phase”. They are also expected to keep their hands on the wheel and “maintain control and responsibility for the vehicle.”

Almost a year ago, Joshua Brown was driving on the highway in Florida when he decided to put his Tesla car into self-driving mode. It was a bright spring day and the vehicle’s sensors failed to distinguish a white tractor-trailer crossing the highway against a bright sky. The car didn’t brake and Brown became the first person to die in a self-driving car accident.

Autonomous cars have since been associated with a growing number of errors, accidents, glitches and other malfunctions. Interestingly, human trust in these technologies doesn’t seem to falter: we assume that the technology ‘knows’ what it is doing and are lulled into a false sense of safety. Tech companies are only too happy to confirm that bias and usually blame the humans for any crash or flaw.

James Bridle, Autonomous Trap 001 (Salt Ritual, Mount Parnassus, Work In Progress), 2017

James Bridle, Installation view of Failing to Distinguish Between a Tractor Trailer and the Bright White Sky at Nome Gallery, Berlin, 2017. Photo: Gianmarco Bresao

James Bridle’s solo show Failing to Distinguish Between a Tractor Trailer and the Bright White Sky, which recently opened at NOME project in Berlin, explores the arrival of technologies of prediction and automation into our everyday lives.

The most discussed work in the show is a video showing a driverless car entrapped inside a double circle of road markings made with salt. The vehicle, seemingly unable to make sense of the conflicting information, barely moves back and forth as if under the spell of a mysterious force.

The work admirably demonstrates the limitations of machine perception, the pitfalls of a technology whose inner workings and logic are completely opaque to us, and the difference between human and machine comprehension, between accuracy and reliability.

I sometimes wonder how aware most of us really are of the impact that self-driving vehicles will have on our lives: soon we might not be able to read maps, not just because GPS has made that skill superfluous but because these maps will be unintelligible to us; we might even be seen as too unreliable behind the wheel and be forbidden to drive cars (we’ll have sex instead, apparently).

Taking as their central subject the self-driving car, the works in the exhibition test the limits of human knowing and machine perception, strategize modes of resistance to algorithmic regimes, and devise new myths and poetic possibilities for an age of computation.

It feels strangely ominous to write about autonomous machines on the 1st of May, a day celebrated as International Workers’ Day. After all, these smart systems are going to ‘put us out of a job’. And truck drivers, taxi drivers and delivery drivers are among the professions that will be hit first.

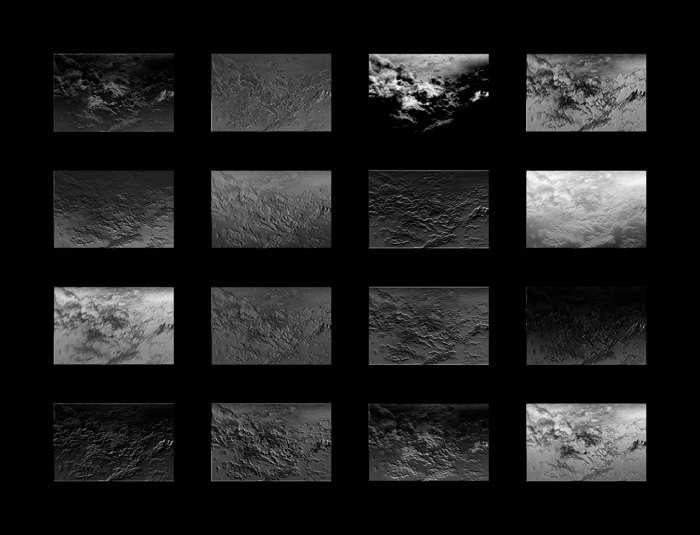

James Bridle, Untitled (Activated Cloud), 2017

I asked the artist, theorist and writer to tell us more about the exhibition:

Hi James! I had a look at the video and not a lot is happening once the car is inside the circle. Which is exactly what you wanted to show, of course. But for all I know, the machine could have stopped working just because it never worked as an autonomous vehicle in the first place, and you could be hiding inside making it move a bit. Could you explain what the machine sees and what causes the car to stall?

The car in the video is not autonomous. My main inspiration for the project was in understanding machine learning, and the system I developed – based on the research and work of many others – was entirely in software. I kitted out a regular car with cameras and sensors – some off the shelf, some I developed myself – and drove it around for days on end. This data is then fed into a neural network, a kind of software modelled originally on the brain itself, which learns to make associations between the datapoints: knowing the kind of speed, or steering angle, which should be associated with certain road conditions, it learns to reproduce them.
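As an editorial illustration (not Bridle's actual software), the idea of learning to reproduce steering from recorded driving can be sketched with a single linear unit standing in for a full neural network. The synthetic driving log, the `lane_offset` feature and the "steer back towards the centre" rule are all assumptions invented for this example.

```python
import random


def make_driving_log(n=200, seed=0):
    """Synthetic stand-in for recorded driving: (lane_offset, steering_angle) pairs."""
    rng = random.Random(seed)
    log = []
    for _ in range(n):
        lane_offset = rng.uniform(-1.0, 1.0)  # hypothetical units, 0 = lane centre
        # Assumed "human" behaviour: steer back towards the centre, with a little noise.
        steering_angle = -0.5 * lane_offset + rng.gauss(0, 0.01)
        log.append((lane_offset, steering_angle))
    return log


def train(log, epochs=200, lr=0.1):
    """Fit steering = w * offset + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(log)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for offset, steering in log:
            err = (w * offset + b) - steering
            grad_w += 2 * err * offset / n
            grad_b += 2 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


log = make_driving_log()
w, b = train(log)
# The learned weight should approximate the steering rule hidden in the data.
print(f"learned steering = {w:.3f} * offset + {b:.3f}")
```

The model is never told the rule; it only sees examples of what the driver did and adjusts its weights until it reproduces them. A real system would use camera images and a much larger network, but the training loop has the same shape.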

I’m really interested in this kind of AI which instead of attempting to describe all the rules of the world from the outset, develops them as a result of direct experience. The result of this form of training is both very powerful, and sometimes very unexpected and strange, as we’re becoming aware of through so many stories about AI “mistakes” and biases. As these systems become more and more embedded in the world, I think it’s really important to understand them better, and also participate in their creation.

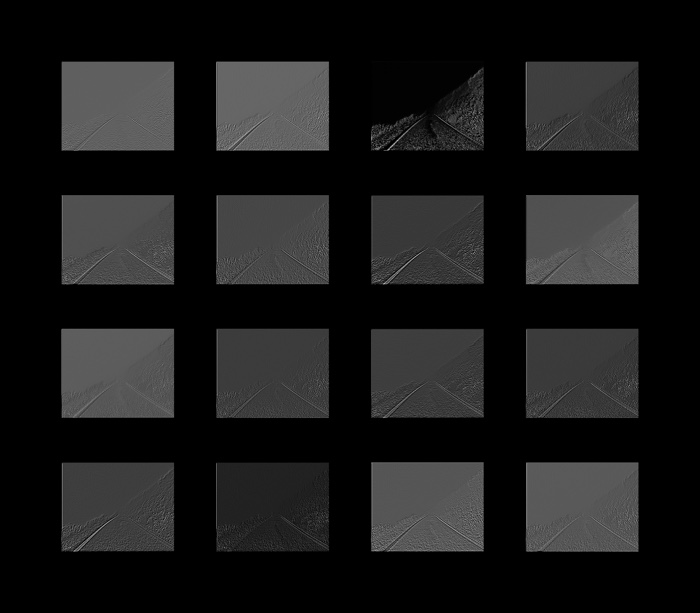

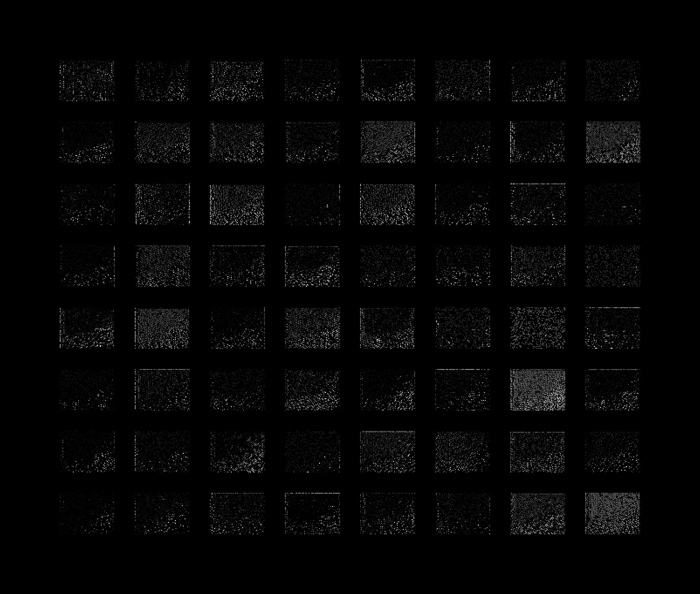

My software is developed to the point where it can read the road ahead, keep to its lane, react to other vehicles and turnings – but in a very limited way. I certainly would not put my life in its hands, but it does give me a window into the way in which such systems function. In the Activations series of prints in the exhibition, which show the way in which the machine translates incoming video data into information, you can see the things highlighted as most significant: the edges of the road, and the white lines which direct it. Any machine trained to obey the rules of the road would and should obey the “rules” of the autonomous trap because it’s simply a no entry sign – but whether such rules are included in the training data of the new generation of “intelligent” vehicles is an open question.

James Bridle, Untitled (Activation 002), 2017

James Bridle, Untitled (Activation 004), 2017

It is a bit daunting to realise that a technology as sophisticated as a driverless car can be fooled by a couple of kilos of salt. In a sense, you fulfil the same role as hackers who enter a system to point out its flaws and gaps and thus help the developers and corporations fix the problem. Have you had any feedback from people in the car industry after the work was published in various magazines?

The autonomous trap is indeed a potential white hat or black hat op. In machine learning, this might be called an “adversarial example” – that is, a situation deliberately engineered to trick the system, so it can learn from and defend against such tricks in the future. It might be useful to some researcher, I don’t really know. But as I’m interested in the ways in which machine intelligence differs from human intelligence, I’ve been following closely many techniques for generating adversarial examples – research papers which show, for example, the ways in which image classifiers can be fooled either with entirely bizarre random-looking images, or with images that, to a human, are indistinguishable. What I like about the trap is that it’s an adversarial example that sits in the middle – that is recognisable to both machine and human senses. As a result, it’s both offensive and communicative – it’s really trying to find a middle or common ground, a space of potential cooperation rather than competition.
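To make the term concrete, here is a minimal sketch of an adversarial example against a toy linear classifier. It is an editorial illustration, not taken from Bridle's project or from any specific paper: the weights, the input features and the labels "road" and "obstacle" are all hypothetical. Each feature is nudged by a small amount in the direction that lowers the classifier's score, which is enough to flip the decision.

```python
def score(weights, bias, x):
    """Linear score: positive means 'road', otherwise 'obstacle'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def classify(weights, bias, x):
    return "road" if score(weights, bias, x) > 0 else "obstacle"


def sign(v):
    return (v > 0) - (v < 0)


def adversarial(weights, x, epsilon):
    """Shift every feature by at most epsilon, against the sign of its weight."""
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical classifier and input, chosen only for illustration.
weights = [0.9, -0.4, 0.3, -0.8, 0.6, -0.7, 0.5, -0.2]
bias = -0.3
x = [0.5, 0.2, 0.4, 0.1, 0.3, 0.2, 0.4, 0.3]

x_adv = adversarial(weights, x, epsilon=0.1)
original_label = classify(weights, bias, x)
adversarial_label = classify(weights, bias, x_adv)
print(original_label, "->", adversarial_label)
```

No single feature moves by more than 0.1, yet the small changes add up across all the inputs and push the score over the decision boundary. With images, the same effect is spread over thousands of pixels, which is why such perturbations can be invisible to a human.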

You placed the car inside a salt circle on a road leading to Mount Parnassus (instead of in a car park or any other urban location that any artist dealing with tech would choose!). The experiment with the autonomous car is thus surrounded by mythology, Dionysian mysteries and magic. Why do you embed this sophisticated technology into myths and enigmatic forces?

The mythological aspects of the project weren’t planned from the beginning, but they have been becoming more pronounced in my work for some time now. While working on the Cloud Index project last year I spent a lot of time with medieval mystical texts, and particularly The Cloud of Unknowing, as a way of thinking through other meanings of “the cloud”, as both computer network and way of knowing.

In particular, I’m interested in a language that admits doubt and uncertainty, that acknowledges that there are things we cannot know yet must take into account, in a way that contemporary technological discourse does not. This seems like a crucial form of discourse for an interconnected yet increasingly complex and fragmented world.

In the autonomous car project, the association with Mount Parnassus and its mythology came about quite simply because I was driving around Attica in order to train the car, and it’s pretty much impossible to drive around Greece without encountering sites from ancient mythology. And this mythology is a continuous thread, not just something from the history books. As I was driving around, I was listening to Robert Graves’ Greek Myths, which connects Greek mythology to pre-Classical animism and ritual cults, as well as to the birth of Christianity and other monotheistic religions. There’s a cave on the side of Mount Parnassus which was sacred, like all rustic caves, to Pan, but has also been written about as a hiding place for the infant Zeus, and various nymphs. The same cave was used by Greek partisans hiding from the Ottoman armies in the nineteenth century and the Nazi occupiers in the twentieth, and no doubt on many other occasions throughout history – there’s a reason those stories were written about that place, and the writing of those stories allowed for that place to retain its power and use. Mythology and magic have always been forms of encoded and active story-telling, and this is what I believe and want technology to be: an agential and inherently political activity, understood as something participatory, illuminating, and potentially emancipatory.

James Bridle, Installation view of Failing to Distinguish Between a Tractor Trailer and the Bright White Sky at Nome Gallery, Berlin, 2017. Photo: Gianmarco Bresao

Your practice as an artist and thinker is widely recognised, so I suspect that you could have knocked on the door of Tesla or Volkswagen and got an autonomous car to play with. Why did you find it so important to build your own self-driving car?

I think it’s incredibly important to understand the medium you’re working with, which in my case was machine vision and machine intelligence as applied to a self-driving car – something that makes its own way in the world. By understanding the materiality of the medium, you really get a sense of a much wider range of possibilities for it – something you will never do with someone else’s machine. I’m not really interested in what Tesla or VW want to do with a self-driving car – although I have a fairly good idea – rather, I’m interested in thinking through and with this technology, and proposing alternative pathways for it – such as getting lost and therefore generating new and unexpected experiences, rather than ones pre-programmed by the manufacturer. Moreover, I’m interested in the very fact that it’s possible for me to do this, and in showing that it’s possible, which is itself today a radical act.

I believe there’s a concrete and causal relationship between the complexity of the systems we encounter every day, the opacity with which most of those systems are constructed or described, and fundamental, global issues of inequality, violence, populism and fundamentalism. Only through self-education, self-organisation, and new forms of systemic literacy can we counter these currents: programming is one form of systemic literacy, demonstrating the accessibility and comprehensibility of these technologies is another.

The salt circle is associated with protection. Do you think our society should be protected from autonomous vehicles?

In certain ways, absolutely. There are many potential benefits to autonomous vehicles, in terms of road safety and ecology, but like all of our technologies there’s also great risk, particularly when control of these vehicles is entirely privatised and corporatised. The best model for an autonomous vehicle future is basically good public transport – so why aren’t we building that? At the moment, the biggest players in autonomous vehicles are the traditional vehicle manufacturers – hardly beacons of social or environmental responsibility – and Silicon Valley zaibatsus such as Google and Uber, whose primary motivation is financialising virtual labour until they develop AI which can cut humans out of the loop entirely. For me, the autonomous vehicle stands in most particularly for the deskilling and automation of all forms of labour (including, in Google’s case, cognitive labour), and as such is a tool for degrading individual and collective agency. This will happen first to truck and taxi drivers, but will slowly extend to most of the workforce which, despite accelerationist dreams, is currently shredding rather than building a social framework which might support a low-work future. So, looked at that way, the corporate-controlled autonomous vehicle and automation in general is absolutely something that should be resisted, while it fails to serve the interest of most of the people it affects.

In all things, technological determinism – the idea that a particular outcome is inevitable because the technology for it exists – must be opposed. Knowing where the off switch is is a vital and necessary complement to the kind of democratic involvement in the design process described above.

The artist statement in the catalogue of the show says that you worked with software and geography. I understand the necessity of the software but geography? What was the role and importance of geography in the project? How did you work with it?

The question which I kept returning to while working on the project, alongside “what does it mean for me to make an autonomous car?” is “what does it mean to make it here?” – that is, not on a test track in Bavaria or a former military base in Silicon Valley, but in Greece, a place with a very different material history and social present. How does a machine see the world when its experience is of fields, mountains, and winding tracks, rather than Californian highways and German autobahns? What is the role of automation in a place already suffering under austerity and unemployment – but which also has always produced its own, characteristic responses to instability? One of the things I find fascinating about the so-called autonomous vehicle is that, in comparison to the traditional car, it’s really as far from autonomous as you can get. It must constantly return to the network, constantly update itself, constantly observe and learn from the world, in order to be able to operate. In this way, it also seems to embody some potentially more connected and more community-minded world – more akin to some of the social movements so active in Greece today than the atomised, alienated passengers of late capitalism.

James Bridle, Gradient Ascent, 2016

In the video and catalogue text entitled “Gradient Ascent”, Mount Parnassus and the journeys around it become an allegory both for general curiosity, and for specific problem-solving: one of the precise techniques in computer science for maximising a complex function is the random walk. Re-instituting geography within the domain of the machine becomes one of the ways of humanising it.
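The mountain allegory maps quite directly onto code. The sketch below is one editorial reading of "maximising a function by random walk", not something taken from the catalogue text: a walker takes small random steps on a hypothetical landscape and keeps only the steps that lead uphill. The `height` function, the summit position and the step size are assumptions chosen for illustration. Gradient ascent proper would instead measure the slope and step along it.

```python
import random


def height(x, y):
    """A hypothetical smooth 'mountain' with its summit at (1, 2), height 0."""
    return -((x - 1.0) ** 2 + (y - 2.0) ** 2)


def random_walk_ascent(start, steps=2000, step_size=0.1, seed=0):
    """Take random steps, keeping only those that increase the height."""
    rng = random.Random(seed)
    x, y = start
    best = height(x, y)
    for _ in range(steps):
        nx = x + rng.gauss(0, step_size)
        ny = y + rng.gauss(0, step_size)
        h = height(nx, ny)
        if h > best:  # only accept uphill moves
            x, y, best = nx, ny, h
    return (x, y), best


start = (0.0, 0.0)
summit, summit_height = random_walk_ascent(start)
print(f"started at height {height(*start):.2f}, ended at {summit_height:.4f}")
```

The walker has no map and no view of the summit. It only compares where it stands with where it has just stepped, which is roughly what wandering up a mountain road without directions amounts to.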

I was reading on Creators that this is just the beginning of a series of experiments for the car. Do you already know where you will go next with the technology?

I’m still quite resistant to the idea of asking a manufacturer for an actual vehicle, and for now my resources are pretty limited, but it might be possible to move onto the mechanical part of the project in other ways – I’ve had some interest from academic and research groups. I think there’s lots more to be done in exploring other uses for the autonomous vehicle – as well as questions of agency and liability. What might autonomous vehicles do to borders, for example, when their driverless nature makes them more akin to packets on a borderless digital network? What new forms of community, as hinted above, might they engender? On the other hand, I never set out to build a fully functioning car, but to understand and think through the processes of developing it, and to learn from the journey itself. I think I’m more interested in the future of machine intelligence and machinic thinking than I am in the specifics of autonomous vehicles, but I hope it won’t be the last time I get to collaborate with a system like this.

Thanks James!

James Bridle’s solo show Failing to Distinguish Between a Tractor Trailer and the Bright White Sky is at NOME project in Berlin until July 29, 2017.