Remember that Tuesday post? I was sending you to London on a mission to visit Constructing Realities, an exhibition that showcases the best work from the Postgraduate Certificate Course in Advanced Architectural Research, at the Bartlett School of Architecture, UCL. I also promised I’d come back with more projects from the show.

This one is, imho, just as fascinating as The Fortress of Senses but it is also strikingly different. Subverting the LiDAR Landscape: Tactics of spatial redefinition for a digitally empowered population is a speculative project which questions the way we interact with digital and physical versions of our cities.

The project is based around LiDAR technology – 3D scanning but on a city scale. Google Earth and Street View have now become people’s most trusted tools for exploring and researching urban space. Moreover, the tools are now taken as virtual fact by a global internet population. They will soon be replaced by intricate 3D-modeled versions of our cities derived from mobile 3D scanning units – LiDAR-equipped vehicles.

Matthew Shaw’s project aims to subvert this mapping, by arming the population with the tools to edit the way their city is scanned and recorded. These tools are not digital hacks but physical interventions. They manipulate the scanning process and act as waypoints and markers linking the physical world to the digital.

I’m leaving you with Matthew’s description of the project:

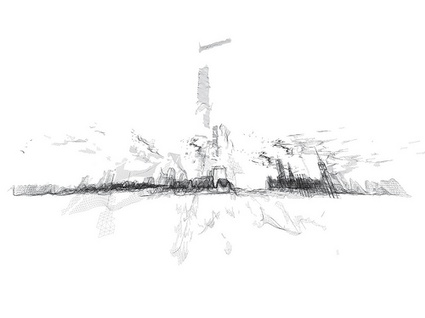

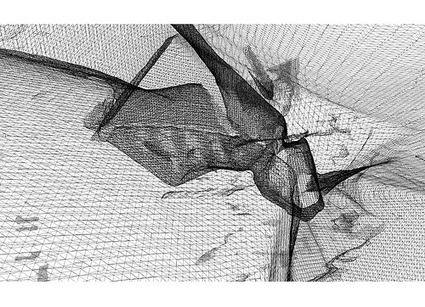

A subverted scan of London

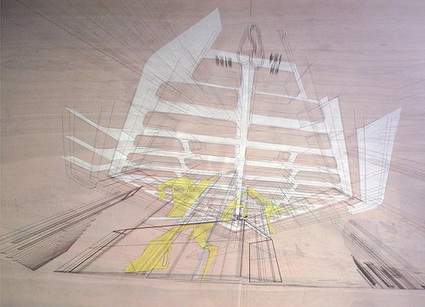

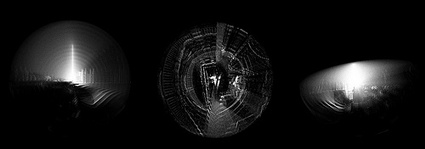

The Surveillance series are drawings that explore the city from stealth locations. They see what a LiDAR unit sees, what through-wall radar can sense, what an IRA bomber may have thought, what Al-Qaida may be watching. They hide, see through walls, bend light and look round corners.

Surveillance

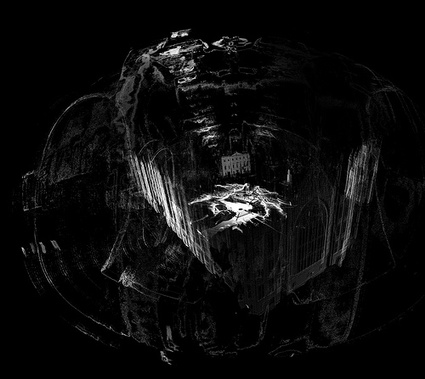

The Scan series are hybrid landscapes of real and imagined LiDAR data. They take actual 3D scans of the parliament area of London and breed them with speculative LiDAR blooms, blockages, holes and drains. These are the result of strategically deployed devices which offset, copy, paste, erase and tangle LiDAR data around them. They show the route of stealth drills carving LiDAR data in the public redecoration zone. They show boundary miscommunication devices – hotspots of spatial truths and mistruths. They show the deployment of flash architecture and toolpaths of stealth mechanics. Parliament is offset to St James’s Park; protestors shelter under a LiDAR shield on the Mall; an urban transplant replaces Downing Street with an insurgent gateway and a Huas-MattaClarkian vista.

Scan

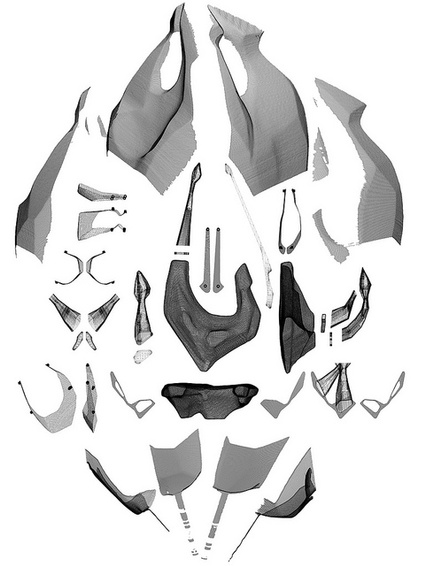

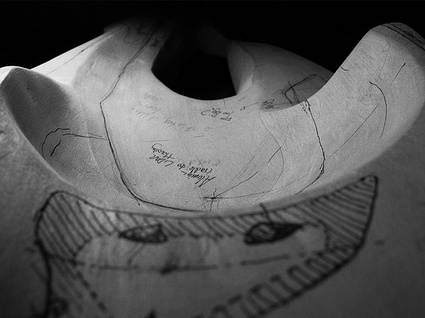

A series of prototypical objects explore the form and materiality of stealth and subversion. Each object starts life as an intuitively carved wooden sketch. These then become 3D notebooks on which to design precise insertions and additions. The objects are then 3D scanned using a self-built scanner to enable precision inserts to be machined and added to the originals. These objects are then scanned and their digital siblings cast and machined from the scanned data.

Prototype

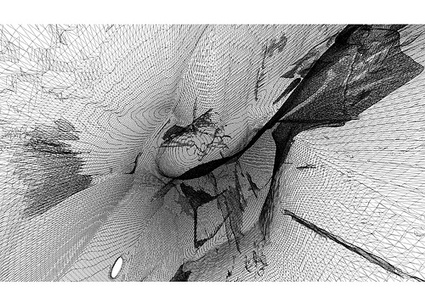

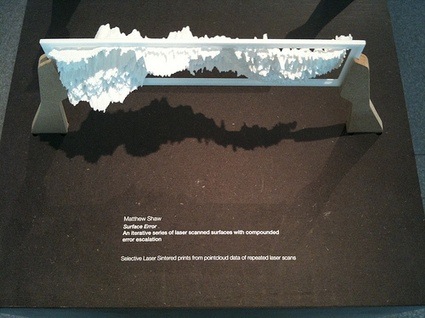

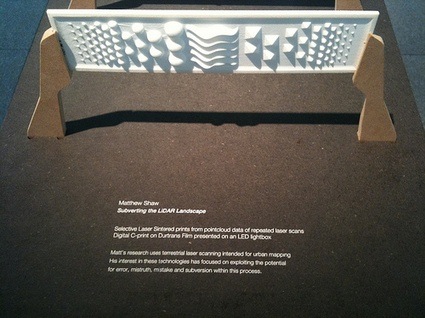

The Surface Error series compounds the slight errors implicit in the scanning process and shows the distortion, mistruth and beauty that repeated error can create. A base SLS-printed target is repeatedly scanned, 3D printed and re-scanned for 12 iterations. This micro test of distortion could be applied on a city scale, altering its digital appearance.
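For the curious, here is a rough way to picture how small errors pile up over those 12 scan, print and re-scan cycles. This is only a toy sketch, not Matthew's process: the target is a hypothetical ring of points, each cycle is assumed to add a little independent Gaussian jitter to every point, and the names `rescan`, `rms_deviation` and `simulate` as well as the noise level are made up for illustration.

```python
import math
import random


def rescan(points, noise_sigma, rng):
    """One hypothetical scan-print-rescan cycle: jitter every point slightly."""
    return [
        (x + rng.gauss(0.0, noise_sigma), y + rng.gauss(0.0, noise_sigma))
        for x, y in points
    ]


def rms_deviation(original, current):
    """Root-mean-square distance between matching points of two shapes."""
    total = sum(
        (cx - ox) ** 2 + (cy - oy) ** 2
        for (ox, oy), (cx, cy) in zip(original, current)
    )
    return math.sqrt(total / len(original))


def simulate(iterations=12, n_points=1000, noise_sigma=0.01, seed=0):
    """Return the drift from the original target after each cycle."""
    rng = random.Random(seed)
    # Assumed stand-in for the printed target: points on a unit circle.
    target = [
        (math.cos(2 * math.pi * i / n_points), math.sin(2 * math.pi * i / n_points))
        for i in range(n_points)
    ]
    current = target
    drift = []
    for _ in range(iterations):
        # Each cycle starts from the previous (already distorted) copy,
        # so errors accumulate instead of averaging out.
        current = rescan(current, noise_sigma, rng)
        drift.append(rms_deviation(target, current))
    return drift


if __name__ == "__main__":
    for i, d in enumerate(simulate(), start=1):
        print(f"iteration {i:2d}: RMS drift {d:.4f}")
```

Because every cycle copies the previous copy rather than the original, the drift grows with each pass. A real scanner and printer would add systematic distortions too (smoothing, shrinkage, missing data), which this sketch leaves out.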

Surface Error

The Parliament series is made of subverted terrestrial laser scans and their respective tools, tool paths and deployment diagrams.

The scans were taken in Westminster, London between 7:23pm on June 3 and 11:56pm on June 17 and show pointcloud data collected near the Houses of Parliament. The facade of Parliament is visible in swarming clouds of scanned noise and subverted data. These mistruths are engineered through a series of strategically placed disruptive objects positioned in the scan path.

—

[The first three series of works are from the master’s project 2008/09 and hypothesise the subversion of large scale terrestrial laser scanning. The final two series test these ideas using a £70k Faro Photon 120 terrestrial laser scanner on loan to the Bartlett from the manufacturers. The scanner is capable of scanning 360 degrees of intricate 3D data in full colour at distances of up to 150 meters. This research is continuing along with other scanning projects as part of ScanLAB@theBartlett, more info to be revealed shortly!]

For further information please contact matthew.shaw at ucl.ac.uk.

Thanks Matthew!

The exhibition Constructing Realities runs until October 1st at PHASE 2 Gallery, 8 Fitzroy St, London W1T 4BJ (map).

Related: Book Review: Digital Architecture – Passages Through Hinterlands.